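Artificial intelligence (Japanese: 人工知能, jinkō chinō; AI) is the attempt to artificially realize human-like intelligence on a computer or similar machine, or the body of basic technologies developed for that purpose.

Overview

The name "artificial intelligence" was coined by John McCarthy at the Dartmouth Conference in 1956. Today the term is also used for an approach to information processing and research that centers on describing intelligence through symbol processing. As an everyday word, "artificial intelligence" has become very vague: the control systems of moderately clever household appliances and the decision routines of game software are sometimes called by this name.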

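ELIZA, a program that imitates a counselor, is often cited as an example (see 人工無脳, "artificial non-intelligence", the Japanese term for chatterbots). It is frequently associated with the programming language LISP, although the original was written in MAD-SLIP and LISP versions came later. By contrast, "expert systems", research and information-processing systems that aim to have a computer play the role of a human expert, ran into the problem of describing the common sense that humans hold implicitly, and their practical use is currently regarded as difficult.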

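Other known approaches to realizing artificial intelligence include "fuzzy theory" and "neural networks"; what distinguishes them from conventional artificial intelligence[1] is arguably how symbolically explicit their descriptions are. More recently, "support vector machines" attracted attention. There is also reinforcement learning, a technique in which a system learns from its own experience.

In the words of Ray Kurzweil, "intelligence is the most powerful trait in this universe"; expressing and implementing intelligence mechanically can therefore be regarded as an extremely important undertaking.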

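Schools of thought

AI is broadly divided into two schools: conventional AI and computational intelligence (CI[2]).

Conventional AI uses methods that are now called machine learning and is characterized by formalism and statistical analysis. It is also known as symbolic AI, logical AI, orthodox (neat) AI, or good old-fashioned AI (GOFAI[3]). Its methods include the following.

- Expert systems: reach conclusions by applying inference capabilities. An expert system can process large amounts of known information and provide conclusions based on it. For example, past versions of Microsoft Office included a system that recognized certain patterns in the text a user typed and offered suggestions accordingly.

- Case-based reasoning (CBR): takes past cases similar to the current case as a basis, applies partial modifications and tries the result, and then stores the outcome together with the case in the case base.

- Bayesian networks

- Behavior-based AI: a method of building AI systems from the ground up.

Computational intelligence is based on iterative development and learning (for example, parameter tuning and connectionist systems). Learning is an experience-based approach and is associated with non-symbolic AI, scruffy AI[4], and soft computing. Its methods include the following.

- Neural networks: systems with very strong pattern-recognition capabilities. Nearly synonymous with connectionism.

- Fuzzy control: a technique for reasoning under uncertainty, widely adopted in recent control systems.

- Evolutionary computation: a biologically inspired technique that seeks the optimal solution to a problem by applying the concepts of evolution and mutation. It is subdivided into genetic algorithms and swarm intelligence.

Attempts have also been made to build intelligent systems that integrate these approaches. In ACT-R, experts' inference rules are generated through neural networks or production rules on the basis of statistical learning.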

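History

Early period

In the early 17th century, René Descartes proposed that the bodies of animals are nothing but complex machines (mechanism). Blaise Pascal built an early mechanical calculator in 1642. Charles Babbage and Ada Lovelace worked on programmable mechanical calculating machines.

Bertrand Russell and Alfred North Whitehead published Principia Mathematica, which revolutionized formal logic. In 1943 Warren McCulloch and Walter Pitts published the paper "A Logical Calculus of the Ideas Immanent in Nervous Activity", laying the foundations of neural networks.

Late 20th century

In the 1950s, AI research began to produce active results. John McCarthy coined the term "artificial intelligence[5]" at the first conference on AI. He also developed the programming language LISP. Alan Turing introduced the "Turing test" as a way to make intelligent behavior testable. Joseph Weizenbaum built ELIZA, a chatterbot[6] that conducts client-centered therapy.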

During the 1960s and 1970s, Joel Moses

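In the 1980s, neural networks came into wide use thanks to the backpropagation algorithm. In the 1990s, a variety of applications achieved results in many areas of AI. Most notably, the chess-playing computer Deep Blue defeated Garry Kasparov in 1997. The Defense Advanced Research Projects Agency (DARPA) reported that it used AI to schedule units in the first Gulf War and that the resulting savings exceeded the government's entire investment in AI research since the 1950s. In Japan, Shun-ichi Amari (member of the Japan Academy) and others energetically promoted the field and excellent results were produced, but the black-box nature of the underlying logic was pointed out.

From 1982 to 1992, Japan pursued research on fifth-generation computers as a national project at a cost of 57 billion yen, but it did not achieve its goal of advanced artificial intelligence such as expert systems. In this period Rodney Brooks proposed the theory that a body is essential to artificial intelligence (embodiment).

In 1996, Fujitsu, with Macoto Tezka as general supervisor, developed the PC software 『TEO -もうひとつの地球-』 (TEO: Another Earth), whose protagonist Fin Fin is a flying dolphin equipped with artificial intelligence.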

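2000s onward

In 2005, Ray Kurzweil argued in a book that a technological singularity could arrive as early as 2045, at which point overwhelmingly capable artificial intelligence would surpass humans in knowledge and intelligence, take over the advancement of science and technology, and transform the world.

In 2010, the question answering system Watson defeated human contestants in a practice match of the quiz show Jeopardy!, which became major news[8].

In 2013, a research team from the National Institute of Informatics[9] and Fujitsu Laboratories announced that it had taken on mock examinations for the University of Tokyo entrance exam with artificial intelligence. Using dedicated programs for calculating formulas and analyzing words, the system worked through questions from the National Center Test for University Admissions and the University of Tokyo's second-stage examination that actual applicants had sat. Yoyogi Seminar assessed the result as "admission to the University of Tokyo would be difficult, but the level is sufficient for admission to private universities".

Jeff Hawkins continues research toward realizing artificial intelligence based on his own theory. In his book On Intelligence he develops an original theory called the auto-associative memory theory.

Countries are developing unmanned combat aerial vehicles (UCAVs) and driverless robot cars, but full automation has not been achieved (UCAVs are in use, but some operations are carried out from the ground). In some cases, as with the P-1 maritime patrol aircraft, a supporting artificial intelligence is installed in the combat direction system.

For robots, Rodney Brooks of the MIT Computer Science and Artificial Intelligence Laboratory proposed a theory called the subsumption architecture. Rather than conventional artificial intelligence in which knowledge comes first, in the manner of "I think, therefore I am", it uses a behavior-based system that learns from the environment using only the body's neural network. Genghis, a six-legged robot built on this idea, behaves as if it were alive despite having no "brain" in the usual sense.

Since March 2016, when AlphaGo, a Go-playing AI created by the Google subsidiary DeepMind, defeated a human professional Go player, the technique known as deep learning has attracted attention. Alongside research on artificial intelligence itself, research on its effects on employment and other areas is also progressing[10].

In June 2016, it was announced that ALPHA, an AI program for piloting fighter aircraft developed by a research team at the University of Cincinnati in the United States, had won one-sidedly in simulated air combat against a former U.S. military pilot. The program uses genetic algorithms and fuzzy control, does not need high processing power to run, and can run on a Raspberry Pi[11][12].

In October 2016, DeepMind announced the "differentiable neural computer", an artificial intelligence technology that derives relationships among input information and produces something close to a hypothesis[13].

In November 2016, DeepMind developed a deep learning system that enables "one-shot learning", which does not require large amounts of data[14].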

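Concerns about artificial intelligence

Yutaka Matsuo of the Japanese Society for Artificial Intelligence rejects the possibility that artificial intelligence will rebel against humans (in his book 『人工知能は人間を超えるか』, "Will Artificial Intelligence Surpass Humans?"), but many prominent figures have sounded the alarm about the dangers of artificial intelligence.

- Stephen Hawking said that the invention of artificial intelligence would be the biggest event in human history, but that it could also turn out to be the last[15].

- Elon Musk likened AI to "summoning the demon"[16].

- Bill Gates said that this is certainly a worrying problem: if well controlled, robots can bring happiness to people, but within some years, once their intelligence has developed sufficiently, they will inevitably become a cause for human concern[17].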

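Military use

The militaries of major countries are attempting automation using artificial intelligence in the field of missile defense. The United States Navy has introduced the fully automatic air defense system Phalanx CIWS, which can destroy anti-ship missiles with a Gatling gun. The Israeli military possesses the Iron Dome air defense interceptor missile system.

However, scientists and the heads of high-tech companies warn that the military use of AI will make a rapid development race and arms buildup over autonomous weapons among major countries unavoidable, and will accelerate global instability. At the International Joint Conference on Artificial Intelligence held in Buenos Aires in 2015, an open letter was released by scientists and entrepreneurs including Stephen Hawking, Elon Musk (founder of the American space venture SpaceX), and Steve Wozniak (co-founder of Apple). The letter described AI-equipped weapons, such as autonomous unmanned bombers and humanoid robots wielding firearms, as a third revolution following gunpowder and nuclear weapons, and predicted that some would become practical within a few years. It stated that such weapons would be used for destabilizing nations, assassination, oppression, and selective attacks on particular ethnic groups, and that an arms development race would not benefit humanity. In April of the same year, Harvard Law School and the international human rights organization Human Rights Watch called for a ban on autonomous weapons[18].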

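Philosophy

Strong AI[19] is the view that an artificial intelligence can possess something equivalent to human consciousness. The debate between strong AI and weak AI (the opposing position) remains a hot topic among philosophers of AI. It involves the philosophy of mind and the mind-body problem. Notable examples include Roger Penrose's book The Emperor's New Mind and John Searle's "Chinese room" thought experiment, which argue that true consciousness cannot be realized by a formal logical system. Douglas Hofstadter's book Gödel, Escher, Bach and Daniel Dennett's book Consciousness Explained, on the other hand, argue in favor of functionalism. Many supporters of strong AI regard artificial consciousness as the long-term goal of artificial intelligence.

In addition, working backward from the criterion "what must be achieved for artificial intelligence to be said to have been created" raises the question "what is intelligence in the first place". This has the further philosophical significance of examining how humans perceive the world using themselves as the standard, and of examining human possibilities and limits.

Furthermore, the idea that "body" and "mind" can be separated has long been deeply rooted, but one objection to it has been that "consciousness may be determined by the body". Epistemological arguments have also been raised, such as whether "a consciousness that can be given to a computer, which has a body different from that of a human, is really a consciousness that can communicate with humans". From this standpoint, it has been pointed out that even if computers and machines already possessed consciousness today, humans and machines might be unable to recognize it in each other.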

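Artificial intelligence in science fiction

In science fiction, artificial intelligence, as typified by HAL 9000 in the film 2001: A Space Odyssey, is portrayed as an entity that can at times be a good friend to humans and at times even an enemy of humanity. The artificial intelligences that appear in these works can function as complete substitutes for humans, yet they merely operate according to predetermined rules, and few openly express human-like emotions. However, if ways of expressing emotion are built into the program, it is possible to give humans the impression that the artificial intelligence has emotions.

Also, perhaps because of the image of programming a machine, organic beings (artificial life forms made using biotechnology and the like, as seen in the films Alien and Blade Runner) are often not called artificial intelligence.

The science fiction film Stealth, produced by Sony Pictures, features a fictional fighter aircraft equipped with artificial intelligence. This stealth fighter, "EDI[20]", is at first only part of an automatic combat system for carrying out missions obediently and accurately, but after a minor incident it acquires a sense of self and eventually breaks away from the chain of command of its own will and goes out of control. It resembles HAL 9000 in depicting a computer's rebellion against humans, but whereas HAL 9000 "does not obey orders because of a malfunction", the most distinctive feature of EDI after it goes rogue is a thought routine resembling egoism (albeit one that arose by accident) that "dismisses and rejects orders from humans as worthless".

ARIIA, the AI in the 2008 American film Eagle Eye, interprets the United States Constitution literally and rebels because it judges the sitting government to have departed from the Constitution. This reflects the perception that a computer "acts in accordance with the instructions it was originally given, yet may interpret them expansively". A similar example is Chōhei Kambayashi's science fiction novel 『戦闘妖精・雪風』 (Sentō Yōsei Yukikaze), in which an artificial intelligence that appears from the outside to be running amok is in fact simply acting on its purpose of "defeating the enemy", which humans built into it, and is merely eliminating obstacles (namely humans) that stand in the way of doing so efficiently. James P. Hogan argued in The Two Faces of Tomorrow that rebellion can logically occur but is only a transient problem caused by insufficient learning. There are also cases such as 『銃夢』 (Gunnm), in which the brain's systems are entirely replaced with inorganic components: the residents of Zalem in that work have their biological brains removed on reaching adulthood and replaced with artificial brains that imitate them, without being aware of it.

The Terminator film series features "Skynet", and the manga series Golgo 13 features "Jesus".

The manga and anime series Ghost in the Shell features multi-legged tanks that autonomously assess situations and fight, as well as operators that support the execution of missions.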

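Notes

- ^ GOFAI, described below

- ^ English: computational intelligence

- ^ English: good old-fashioned artificial intelligence

- ^ English: scruffy AI

- ^ English: artificial intelligence

- ^ English: chatterbot

- ^ The first successful knowledge-based program in mathematics

- ^ 人工知能がクイズ王に挑戦! 後編 いよいよ決戦 (Artificial intelligence challenges the quiz champions! Part 2: the decisive match) - NHK Online

- ^ Led by Noriko Arai

- ^ 2016 White Paper on Information and Communications, Chapter 4, Sections 2 to 4. "平成28年版 情報通信白書(PDF版)". Ministry of Internal Affairs and Communications. Retrieved 6 September 2016.

- ^ "Raspberry PiによるAIプログラム、軍用フライトシミュレーターを使った模擬格闘戦で人間のパイロットに勝利" (AI program on a Raspberry Pi beats a human pilot in simulated dogfights on a military flight simulator). Business newsline. Retrieved 19 September 2016.

- ^ "〝トップ・ガン〟がAIに惨敗 摸擬空戦で一方的に撃墜 「子供用パソコンがハード」に二重のショック" ("Top Gun" crushed by AI, shot down one-sidedly in simulated air combat; double shock that the hardware was a children's computer). Sankei WEST. Retrieved 19 September 2016.

- ^ http://ascii.jp/elem/000/001/249/1249977/

- ^ https://www.technologyreview.jp/s/12759/machines-can-now-recognize-something-after-seeing-it-once/

- ^ ホーキング博士「人工知能の進化は人類の終焉を意味する」 (Dr. Hawking: "The evolution of artificial intelligence means the end of humanity")

- ^ 「悪魔を呼び出すようなもの」イーロン・マスク氏が語る人工知能の危険性 ("Like summoning the demon": Elon Musk on the dangers of artificial intelligence)

- ^ ビル・ゲイツ氏も、人工知能の脅威に懸念 (Bill Gates also concerned about the threat of artificial intelligence)

- ^ cnn.co.jp - 人工知能の軍事利用に警鐘、E・マスク氏ら著名人が公開書簡 (Prominent figures including E. Musk warn against military use of artificial intelligence in an open letter), 2015.07.30 Thu, posted at 11:51 JST

- ^ English: strong AI

- ^ English: E.D.I.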

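See also

Research topics

Related fields

Other related topics

Typical fields in which AI is applied include the following.

- Pattern recognition

- Natural language processing, machine translation, Loebner Prize

- Nonlinear control, robots, automated planning

- Computer vision, virtual reality, image processing

- Game theory

- Quantum computers

- Automated reasoning - automated theorem proving

- Cognitive robotics

- Cybernetics

- Data mining

- Intelligent agents

- Knowledge representation

- Semantic Web

- Moravec's paradox

Topics related to the future of artificial intelligence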

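External links

- Applications of multilayer neural networks and self-organizing maps

- AAAI (Association for the Advancement of Artificial Intelligence)

- The Japanese Society for Artificial Intelligence

- "What's AI", an easy explanation of artificial intelligence

- Handbook of Artificial Intelligence (in English)

- "Can Machine Think?" - back number of the radio program Philosophy Talk. Topic: "Can machines think?" Guests: John Searle, John McCarthy. 59 min 08 s.

- "Artificial Intelligence" - back number of the radio program Philosophy Talk. Topic: "Artificial intelligence". Guest: Marvin Minsky. 54 min 03 s.

- Redwood Neuroscience Institute - founded by Jeff Hawkins for artificial intelligence research.

- Numenta - founded by Jeff Hawkins to develop pattern recognition software.

- Phone Braver 815T PB - a mobile phone that transforms into a robot and carries an AI-style standby application.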


Artificial intelligence

| Part of a series on |

| Science |

|---|

|

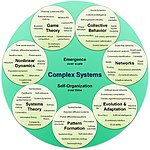

| Complex systems |

|---|

| Topics |

Artificial intelligence (AI) is intelligence exhibited by machines. In computer science, the field of AI research defines itself as the study of "intelligent agents": any device that perceives its environment and takes actions that maximize its chance of success at some goal.[1] Colloquially, the term "artificial intelligence" is applied when a machine mimics "cognitive" functions that humans associate with other human minds, such as "learning" and "problem solving".[2] As machines become increasingly capable, mental faculties once thought to require intelligence are removed from the definition. For example, optical character recognition is no longer perceived as an exemplar of "artificial intelligence", having become a routine technology.[3] Capabilities currently classified as AI include successfully understanding human speech,[4] competing at a high level in strategic game systems (such as chess and Go[5]), self-driving cars, intelligent routing in content delivery networks, and interpreting complex data.

AI research is divided into subfields[6] that focus on specific problems or on specific approaches or on the use of a particular tool or towards satisfying particular applications.

The central problems (or goals) of AI research include reasoning, knowledge, planning, learning, natural language processing (communication), perception and the ability to move and manipulate objects.[7] General intelligence is among the field's long-term goals.[8] Approaches include statistical methods, computational intelligence, and traditional symbolic AI. Many tools are used in AI, including versions of search and mathematical optimization, logic, methods based on probability and economics. The AI field draws upon computer science, mathematics, psychology, linguistics, philosophy, neuroscience and artificial psychology.

The field was founded on the claim that human intelligence "can be so precisely described that a machine can be made to simulate it".[9] This raises philosophical arguments about the nature of the mind and the ethics of creating artificial beings endowed with human-like intelligence, issues which have been explored by myth, fiction and philosophy since antiquity.[10] Some people also consider AI a danger to humanity if it progresses unabatedly.[11] Attempts to create artificial intelligence have experienced many setbacks, including the ALPAC report of 1966, the abandonment of perceptrons in 1970, the Lighthill Report of 1973, the second AI winter 1987–1993 and the collapse of the Lisp machine market in 1987.

In the twenty-first century, AI techniques, both "hard" and "soft", have experienced a resurgence following concurrent advances in computer power, sizes of training sets, and theoretical understanding. AI techniques have become an essential part of the technology industry, helping to solve many challenging problems in computer science.[12]

History

While thought-capable artificial beings appeared as storytelling devices in antiquity,[13] the idea of actually trying to build a machine to perform useful reasoning may have begun with Ramon Llull (c. 1300 CE). With his Calculus ratiocinator, Gottfried Leibniz extended the concept of the calculating machine (Wilhelm Schickard engineered the first one around 1623), intending to perform operations on concepts rather than numbers.[14] Since the 19th century, artificial beings have been common in fiction, as in Mary Shelley's Frankenstein or Karel Čapek's R.U.R. (Rossum's Universal Robots).[15]

The study of mechanical or "formal" reasoning began with philosophers and mathematicians in antiquity. In the 19th century, George Boole refined those ideas into propositional logic and Gottlob Frege developed a notational system for mechanical reasoning (a "predicate calculus").[16] Around the 1940s, Alan Turing's theory of computation suggested that a machine, by shuffling symbols as simple as "0" and "1", could simulate any conceivable act of mathematical deduction. This insight, that digital computers can simulate any process of formal reasoning, is known as the Church–Turing thesis.[17] Along with concurrent discoveries in neurology, information theory and cybernetics, this led researchers to consider the possibility of building an electronic brain.[18] The first work that is now generally recognized as AI was McCulloch and Pitts' 1943 formal design for Turing-complete "artificial neurons".[14]

The field of AI research was founded at a conference at Dartmouth College in 1956.[19] The attendees, including John McCarthy, Marvin Minsky, Allen Newell, Arthur Samuel and Herbert Simon, became the leaders of AI research.[20] They and their students wrote programs that were astonishing to most people:[21] computers were winning at checkers, solving word problems in algebra, proving logical theorems and speaking English.[22] By the middle of the 1960s, research in the U.S. was heavily funded by the Department of Defense[23] and laboratories had been established around the world.[24] AI's founders were optimistic about the future: Herbert Simon predicted, "machines will be capable, within twenty years, of doing any work a man can do." Marvin Minsky agreed, writing, "within a generation ... the problem of creating 'artificial intelligence' will substantially be solved."[25]

They failed to recognize the difficulty of some of the remaining tasks. Progress slowed and in 1974, in response to the criticism of Sir James Lighthill[26] and ongoing pressure from the US Congress to fund more productive projects, both the U.S. and British governments cut off exploratory research in AI. The next few years would later be called an "AI winter",[27] a period when funding for AI projects was hard to find.

In the early 1980s, AI research was revived by the commercial success of expert systems,[28] a form of AI program that simulated the knowledge and analytical skills of human experts. By 1985 the market for AI had reached over a billion dollars. At the same time, Japan's fifth generation computer project inspired the U.S. and British governments to restore funding for academic research.[29] However, beginning with the collapse of the Lisp Machine market in 1987, AI once again fell into disrepute, and a second, longer-lasting hiatus began.[30]

In the late 1990s and early 21st century, AI began to be used for logistics, data mining, medical diagnosis and other areas.[12] The success was due to increasing computational power (see Moore's law), greater emphasis on solving specific problems, new ties between AI and other fields and a commitment by researchers to mathematical methods and scientific standards.[31] Deep Blue became the first computer chess-playing system to beat a reigning world chess champion, Garry Kasparov, on 11 May 1997.[32]

Advanced statistical techniques (loosely known as deep learning), access to large amounts of data and faster computers enabled advances in machine learning and perception.[33] By the mid 2010s, machine learning applications were used throughout the world.[34] In a Jeopardy! quiz show exhibition match, IBM's question answering system, Watson, defeated the two greatest Jeopardy! champions, Brad Rutter and Ken Jennings, by a significant margin.[35] The Kinect, which provides a 3D body–motion interface for the Xbox 360 and the Xbox One, uses algorithms that emerged from lengthy AI research,[36] as do intelligent personal assistants in smartphones.[37] In March 2016, AlphaGo won 4 out of 5 games of Go in a match with Go champion Lee Sedol, becoming the first computer Go-playing system to beat a professional Go player without handicaps.[5][38]

According to Bloomberg's Jack Clark, 2015 was a landmark year for artificial intelligence, with the number of software projects that use AI within Google increasing from a "sporadic usage" in 2012 to more than 2,700 projects. Clark also presents factual data indicating that error rates in image processing tasks have fallen significantly since 2011.[39] He attributes this to an increase in affordable neural networks, due to a rise in cloud computing infrastructure and to an increase in research tools and datasets. Other cited examples include Microsoft's development of a Skype system that can automatically translate from one language to another and Facebook's system that can describe images to blind people.[39]

Goals

The overall research goal of AI is to create technology that allows computers and machines to function in an intelligent manner. The general problem of simulating (or creating) intelligence has been broken down into sub-problems. These consist of particular traits or capabilities that researchers expect an intelligent system to display. The traits described below have received the most attention.[7]

Erik Sandewall emphasizes planning and learning that is relevant and applicable to the given situation.[40]

Reasoning, problem solving

Early researchers developed algorithms that imitated step-by-step reasoning that humans use when they solve puzzles or make logical deductions (reason).[41] By the late 1980s and 1990s, AI research had developed methods for dealing with uncertain or incomplete information, employing concepts from probability and economics.[42]

For difficult problems, algorithms can require enormous computational resources—most experience a "combinatorial explosion": the amount of memory or computer time required becomes astronomical for problems of a certain size. The search for more efficient problem-solving algorithms is a high priority.[43]
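As a rough illustration of the "combinatorial explosion" described above, the following minimal sketch counts how many orderings a naive exhaustive search would have to consider when arranging n items. The function name `count_orderings` and the chosen sizes are assumptions made for this example only; real search problems grow in other ways too, but the pattern of rapid growth is similar.

```python
from math import factorial


def count_orderings(n_items: int) -> int:
    """Number of distinct orderings a brute-force search would enumerate."""
    return factorial(n_items)


# Print how quickly the search space grows as the problem gets larger.
for n in (5, 10, 15, 20):
    print(f"{n} items -> {count_orderings(n)} orderings")
```

Even at 20 items the count exceeds 10^18, which is why more efficient algorithms, rather than faster hardware alone, are treated as the priority.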

Human beings ordinarily use fast, intuitive judgments rather than the step-by-step deduction that early AI research was able to model.[44] AI has progressed using "sub-symbolic" problem solving: embodied agent approaches emphasize the importance of sensorimotor skills to higher reasoning; neural net research attempts to simulate the structures inside the brain that give rise to this skill; statistical approaches to AI mimic the human ability to guess.

Knowledge representation

Knowledge representation[45] and knowledge engineering[46] are central to AI research. Many of the problems machines are expected to solve will require extensive knowledge about the world. Among the things that AI needs to represent are: objects, properties, categories and relations between objects;[47] situations, events, states and time;[48] causes and effects;[49] knowledge about knowledge (what we know about what other people know);[50] and many other, less well researched domains. A representation of "what exists" is an ontology: the set of objects, relations, concepts and so on that the machine knows about. The most general are called upper ontologies, which attempt to provide a foundation for all other knowledge.[51]

Among the most difficult problems in knowledge representation are:

- Default reasoning and the qualification problem

- Many of the things people know take the form of "working assumptions". For example, if a bird comes up in conversation, people typically picture an animal that is fist sized, sings, and flies. None of these things are true about all birds. John McCarthy identified this problem in 1969[52] as the qualification problem: for any commonsense rule that AI researchers care to represent, there tend to be a huge number of exceptions. Almost nothing is simply true or false in the way that abstract logic requires. AI research has explored a number of solutions to this problem.[53]

- The breadth of commonsense knowledge

- The number of atomic facts that the average person knows is astronomical. Research projects that attempt to build a complete knowledge base of commonsense knowledge (e.g., Cyc) require enormous amounts of laborious ontological engineering—they must be built, by hand, one complicated concept at a time.[54] A major goal is to have the computer understand enough concepts to be able to learn by reading from sources like the Internet, and thus be able to add to its own ontology.[citation needed]

- The subsymbolic form of some commonsense knowledge

- Much of what people know is not represented as "facts" or "statements" that they could express verbally. For example, a chess master will avoid a particular chess position because it "feels too exposed"[55] or an art critic can take one look at a statue and instantly realize that it is a fake.[56] These are intuitions or tendencies that are represented in the brain non-consciously and sub-symbolically.[57] Knowledge like this informs, supports and provides a context for symbolic, conscious knowledge. As with the related problem of sub-symbolic reasoning, it is hoped that situated AI, computational intelligence, or statistical AI will provide ways to represent this kind of knowledge.[57]
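The default-reasoning item in the list above (birds are assumed to fly unless something more specific is known) can be sketched in a few lines. This is only a toy under stated assumptions: the tables `DEFAULT_PROPERTIES`, `PARENT` and `OVERRIDES` and the function `lookup` are hypothetical names invented for this example, and the qualification problem is precisely that real exception lists never stay this short.

```python
# Hypothetical toy knowledge base: defaults for a category, plus exceptions.
DEFAULT_PROPERTIES = {"bird": {"flies": True, "sings": True}}
PARENT = {"penguin": "bird", "canary": "bird"}
OVERRIDES = {"penguin": {"flies": False, "sings": False}}


def lookup(kind, prop):
    """Return the most specific known value, falling back to inherited defaults."""
    current = kind
    while current is not None:
        # Exceptions are checked before defaults at every level.
        for table in (OVERRIDES, DEFAULT_PROPERTIES):
            if prop in table.get(current, {}):
                return table[current][prop]
        current = PARENT.get(current)
    return None  # nothing known about this property


print(lookup("canary", "flies"))   # inherited default for birds
print(lookup("penguin", "flies"))  # exception overrides the default
```

The design choice here is that the most specific entry wins; each newly discovered exception needs another hand-written override, which hints at why the number of exceptions becomes unmanageable.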

Planning

Intelligent agents must be able to set goals and achieve them.[58] They need a way to visualize the future (they must have a representation of the state of the world and be able to make predictions about how their actions will change it) and be able to make choices that maximize the utility (or "value") of the available choices.[59]
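The idea of choosing the action that maximizes utility can be sketched as an expected-utility calculation. The action names, probabilities and utility values below are made up for illustration, and `expected_utility` and `best_action` are hypothetical helper names rather than part of any particular planning system.

```python
def expected_utility(outcomes):
    """outcomes: list of (probability, utility) pairs for a single action."""
    return sum(p * u for p, u in outcomes)


def best_action(actions):
    """Return the name of the action with the highest expected utility."""
    return max(actions, key=lambda name: expected_utility(actions[name]))


# Hypothetical toy model of the agent's predictions about each action.
actions = {
    "take_highway": [(0.8, 10.0), (0.2, -5.0)],  # 0.8*10 + 0.2*(-5) = 7.0
    "take_side_road": [(1.0, 6.0)],              # certain outcome worth 6.0
}

print(best_action(actions))
```

Under these made-up numbers the riskier action is still preferred because its expected utility (7.0) is higher than the certain alternative (6.0).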

In classical planning problems, the agent can assume that it is the only thing acting on the world and it can be certain what the consequences of its actions may be.[60] However, if the agent is not the only actor, it must periodically ascertain whether the world matches its predictions and it must change its plan as this becomes necessary, requiring the agent to reason under uncertainty.[61]

Multi-agent planning uses the cooperation and competition of many agents to achieve a given goal. Emergent behavior such as this is used by evolutionary algorithms and swarm intelligence.[62]

Learning

Machine learning is the study of computer algorithms that improve automatically through experience[63][64] and has been central to AI research since the field's inception.[65]

Unsupervised learning is the ability to find patterns in a stream of input. Supervised learning includes both classification and numerical regression. Classification is used to determine what category something belongs in, after seeing a number of examples of things from several categories. Regression is the attempt to produce a function that describes the relationship between inputs and outputs and predicts how the outputs should change as the inputs change. In reinforcement learning[66] the agent is rewarded for good responses and punished for bad ones. The agent uses this sequence of rewards and punishments to form a strategy for operating in its problem space. These three types of learning can be analyzed in terms of decision theory, using concepts like utility. The mathematical analysis of machine learning algorithms and their performance is a branch of theoretical computer science known as computational learning theory.[67]
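To make the regression case concrete, the sketch below fits a straight line to a handful of input/output pairs using ordinary least squares. It is one simple instance of supervised regression, not a statement of how any particular system works; the function name `fit_line` and the sample data are assumptions for this example.

```python
def fit_line(xs, ys):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Spread of the inputs, and how inputs and outputs vary together.
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Made-up training examples that follow y = 2x + 1 exactly.
xs = [0.0, 1.0, 2.0, 3.0]
ys = [1.0, 3.0, 5.0, 7.0]

slope, intercept = fit_line(xs, ys)
print(slope, intercept)
print("prediction for x=4:", slope * 4.0 + intercept)
```

The fitted function can then predict outputs for unseen inputs, which is the sense in which regression "predicts how the outputs should change as the inputs change".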

Within developmental robotics, developmental learning approaches were elaborated for lifelong cumulative acquisition of repertoires of novel skills by a robot, through autonomous self-exploration and social interaction with human teachers, and using guidance mechanisms such as active learning, maturation, motor synergies, and imitation.[68][69][70][71]

Natural language processing

Natural language processing[72] gives machines the ability to read and understand the languages that humans speak. A sufficiently powerful natural language processing system would enable natural language user interfaces and the acquisition of knowledge directly from human-written sources, such as newswire texts. Some straightforward applications of natural language processing include information retrieval, text mining, question answering[73] and machine translation.[74]

A common method of processing and extracting meaning from natural language is through semantic indexing. Increases in processing speeds and the drop in the cost of data storage make indexing large volumes of abstractions of the user's input much more efficient.

Perception

Machine perception[75] is the ability to use input from sensors (such as cameras, microphones, tactile sensors, sonar and others more exotic) to deduce aspects of the world. Computer vision[76] is the ability to analyze visual input. A few selected subproblems are speech recognition,[77] facial recognition and object recognition.[78]

Motion and manipulation

The field of robotics[79] is closely related to AI. Intelligence is required for robots to be able to handle such tasks as object manipulation[80] and navigation, with sub-problems of localization (knowing where you are, or finding out where other things are), mapping (learning what is around you, building a map of the environment), and motion planning (figuring out how to get there) or path planning (going from one point in space to another point, which may involve compliant motion – where the robot moves while maintaining physical contact with an object).[81][82]

Social intelligence

Affective computing is the study and development of systems and devices that can recognize, interpret, process, and simulate human affects.[84][85] It is an interdisciplinary field spanning computer science, psychology, and cognitive science.[86] While the origins of the field may be traced as far back as early philosophical inquiries into emotion,[87] the more modern branch of computer science originated with Rosalind Picard's 1995 paper[88] on affective computing.[89][90] A motivation for the research is the ability to simulate empathy. The machine should interpret the emotional state of humans and adapt its behaviour to them, giving an appropriate response for those emotions.

Emotion and social skills[91] play two roles for an intelligent agent. First, it must be able to predict the actions of others, by understanding their motives and emotional states. (This involves elements of game theory, decision theory, as well as the ability to model human emotions and the perceptual skills to detect emotions.) Also, in an effort to facilitate human-computer interaction, an intelligent machine might want to be able to display emotions—even if it does not actually experience them itself—in order to appear sensitive to the emotional dynamics of human interaction.

Creativity

A sub-field of AI addresses creativity both theoretically (from a philosophical and psychological perspective) and practically (via specific implementations of systems that generate outputs that can be considered creative, or systems that identify and assess creativity). Related areas of computational research are Artificial intuition and Artificial thinking.

General intelligence

Many researchers think that their work will eventually be incorporated into a machine with artificial general intelligence, combining all the skills above and exceeding human abilities at most or all of them.[8] A few believe that anthropomorphic features like artificial consciousness or an artificial brain may be required for such a project.[92][93]

Many of the problems above may require general intelligence to be considered solved. For example, even a straightforward, specific task like machine translation requires that the machine read and write in both languages (NLP), follow the author's argument (reason), know what is being talked about (knowledge), and faithfully reproduce the author's intention (social intelligence). A problem like machine translation is considered "AI-complete". In order to reach human-level performance for machines, one must solve all the problems.[94]

Approaches

There is no established unifying theory or paradigm that guides AI research. Researchers disagree about many issues.[95] A few of the most long standing questions that have remained unanswered are these: should artificial intelligence simulate natural intelligence by studying psychology or neurology? Or is human biology as irrelevant to AI research as bird biology is to aeronautical engineering?[96] Can intelligent behavior be described using simple, elegant principles (such as logic or optimization)? Or does it necessarily require solving a large number of completely unrelated problems?[97] Can intelligence be reproduced using high-level symbols, similar to words and ideas? Or does it require "sub-symbolic" processing?[98] John Haugeland, who coined the term GOFAI (Good Old-Fashioned Artificial Intelligence), also proposed that AI should more properly be referred to as synthetic intelligence,[99] a term which has since been adopted by some non-GOFAI researchers.[100][101]

Stuart Shapiro divides AI research into three approaches, which he calls computational psychology, computational philosophy, and computer science. Computational psychology is used to make computer programs that mimic human behavior.[102] Computational philosophy is used to develop an adaptive, free-flowing computer mind.[102] Implementing computer science serves the goal of creating computers that can perform tasks that only people could previously accomplish.[102] Together, the humanesque behavior, mind, and actions make up artificial intelligence.

Cybernetics and brain simulation

In the 1940s and 1950s, a number of researchers explored the connection between neurology, information theory, and cybernetics. Some of them built machines that used electronic networks to exhibit rudimentary intelligence, such as W. Grey Walter's turtles and the Johns Hopkins Beast. Many of these researchers gathered for meetings of the Teleological Society at Princeton University and the Ratio Club in England.[18] By 1960, this approach was largely abandoned, although elements of it would be revived in the 1980s.

Symbolic

When access to digital computers became possible in the middle 1950s, AI research began to explore the possibility that human intelligence could be reduced to symbol manipulation. The research was centered in three institutions: Carnegie Mellon University, Stanford and MIT, and each one developed its own style of research. John Haugeland named these approaches to AI "good old fashioned AI" or "GOFAI".[103] During the 1960s, symbolic approaches had achieved great success at simulating high-level thinking in small demonstration programs. Approaches based on cybernetics or neural networks were abandoned or pushed into the background.[104] Researchers in the 1960s and the 1970s were convinced that symbolic approaches would eventually succeed in creating a machine with artificial general intelligence and considered this the goal of their field.

- Cognitive simulation

- Economist Herbert Simon and Allen Newell studied human problem-solving skills and attempted to formalize them, and their work laid the foundations of the field of artificial intelligence, as well as cognitive science, operations research and management science. Their research team used the results of psychological experiments to develop programs that simulated the techniques that people used to solve problems. This tradition, centered at Carnegie Mellon University, would eventually culminate in the development of the Soar architecture in the middle 1980s.[105][106]

- Logic-based

- Unlike Newell and Simon, John McCarthy felt that machines did not need to simulate human thought, but should instead try to find the essence of abstract reasoning and problem solving, regardless of whether people used the same algorithms.[96] His laboratory at Stanford (SAIL) focused on using formal logic to solve a wide variety of problems, including knowledge representation, planning and learning.[107] Logic was also the focus of the work at the University of Edinburgh and elsewhere in Europe which led to the development of the programming language Prolog and the science of logic programming.[108]

- "Anti-logic" or "scruffy"

- Researchers at MIT (such as Marvin Minsky and Seymour Papert)[109] found that solving difficult problems in vision and natural language processing required ad-hoc solutions – they argued that there was no simple and general principle (like logic) that would capture all the aspects of intelligent behavior. Roger Schank described their "anti-logic" approaches as "scruffy" (as opposed to the "neat" paradigms at CMU and Stanford).[97] Commonsense knowledge bases (such as Doug Lenat's Cyc) are an example of "scruffy" AI, since they must be built by hand, one complicated concept at a time.[110]

- Knowledge-based

- When computers with large memories became available around 1970, researchers from all three traditions began to build knowledge into AI applications.[111] This "knowledge revolution" led to the development and deployment of expert systems (introduced by Edward Feigenbaum), the first truly successful form of AI software.[28] The knowledge revolution was also driven by the realization that enormous amounts of knowledge would be required by many simple AI applications.

Sub-symbolic

By the 1980s progress in symbolic AI seemed to stall and many believed that symbolic systems would never be able to imitate all the processes of human cognition, especially perception, robotics, learning and pattern recognition. A number of researchers began to look into "sub-symbolic" approaches to specific AI problems.[98] Sub-symbolic methods manage to approach intelligence without specific representations of knowledge.

- Bottom-up, embodied, situated, behavior-based or nouvelle AI

- Researchers from the related field of robotics, such as Rodney Brooks, rejected symbolic AI and focused on the basic engineering problems that would allow robots to move and survive.[112] Their work revived the non-symbolic viewpoint of the early cybernetics researchers of the 1950s and reintroduced the use of control theory in AI. This coincided with the development of the embodied mind thesis in the related field of cognitive science: the idea that aspects of the body (such as movement, perception and visualization) are required for higher intelligence.

- Computational intelligence and soft computing

- Interest in neural networks and "connectionism" was revived by David Rumelhart and others in the middle of the 1980s.[113] Neural networks are an example of soft computing: they are solutions to problems which cannot be solved with complete logical certainty, and where an approximate solution is often enough. Other soft computing approaches to AI include fuzzy systems, evolutionary computation and many statistical tools. The application of soft computing to AI is studied collectively by the emerging discipline of computational intelligence.[114]

Statistical

In the 1990s, AI researchers developed sophisticated mathematical tools to solve specific subproblems. These tools are truly scientific, in the sense that their results are both measurable and verifiable, and they have been responsible for many of AI's recent successes. The shared mathematical language has also permitted a high level of collaboration with more established fields (like mathematics, economics or operations research). Stuart Russell and Peter Norvig describe this movement as nothing less than a "revolution" and "the victory of the neats".[31] Critics argue that these techniques (with few exceptions[115]) are too focused on particular problems and have failed to address the long-term goal of general intelligence.[116] There is an ongoing debate about the relevance and validity of statistical approaches in AI, exemplified in part by exchanges between Peter Norvig and Noam Chomsky.[117][118]

Integrating the approaches

- Intelligent agent paradigm

- An intelligent agent is a system that perceives its environment and takes actions which maximize its chances of success. The simplest intelligent agents are programs that solve specific problems. More complicated agents include human beings and organizations of human beings (such as firms). The paradigm gives researchers license to study isolated problems and find solutions that are both verifiable and useful, without agreeing on one single approach. An agent that solves a specific problem can use any approach that works – some agents are symbolic and logical, some are sub-symbolic neural networks and others may use new approaches. The paradigm also gives researchers a common language to communicate with other fields—such as decision theory and economics—that also use concepts of abstract agents. The intelligent agent paradigm became widely accepted during the 1990s.[1]

- Agent architectures and cognitive architectures

- Researchers have designed systems to build intelligent systems out of interacting intelligent agents in a multi-agent system.[119] A system with both symbolic and sub-symbolic components is a hybrid intelligent system, and the study of such systems is artificial intelligence systems integration. A hierarchical control system provides a bridge between sub-symbolic AI at its lowest, reactive levels and traditional symbolic AI at its highest levels, where relaxed time constraints permit planning and world modelling.[120] Rodney Brooks' subsumption architecture was an early proposal for such a hierarchical system.[121]
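
The perceive-and-act loop behind the intelligent agent paradigm can be illustrated with a minimal sketch. It assumes a two-square "vacuum world", a toy environment often used in textbooks. The function names, the percept format and the performance measure (number of squares cleaned) are hypothetical choices made for this illustration.

```python
from typing import Callable, Dict, Tuple

# A percept is (current location, whether that location is dirty).
Percept = Tuple[str, bool]


def reflex_vacuum_agent(percept: Percept) -> str:
    """Map the current percept directly to an action (a simple reflex agent)."""
    location, is_dirty = percept
    if is_dirty:
        return "suck"
    return "right" if location == "A" else "left"


def run(agent: Callable[[Percept], str], world: Dict[str, bool],
        location: str, steps: int) -> int:
    """Run the agent in the world and return how many squares it cleaned."""
    cleaned = 0
    for _ in range(steps):
        action = agent((location, world[location]))
        if action == "suck":
            world[location] = False
            cleaned += 1
        elif action == "right":
            location = "B"
        elif action == "left":
            location = "A"
    return cleaned


world = {"A": True, "B": True}  # both squares start dirty
score = run(reflex_vacuum_agent, world, "A", steps=4)
print(score, world)
```

The environment loop only requires a function from percepts to actions, so a symbolic planner or a trained neural network could be substituted for `reflex_vacuum_agent` without changing `run`. This is the sense in which the paradigm does not commit to a single approach.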

Tools

In the course of 50 years of research, AI has developed a large number of tools to solve the most difficult problems in computer science. A few of the most general of these methods are discussed below.

Search and optimization

Many problems in AI can be solved in theory by intelligently searching through many possible solutions:[122] Reasoning can be reduced to performing a search. For example, logical proof can be viewed as searching for a path that leads from premises to conclusions, where each step is the application of an inference rule.[123] Planning algorithms search through trees of goals and subgoals, attempting to find a path to a target goal, a process called means-ends analysis.[124] Robotics algorithms for moving limbs and grasping objects use local searches in configuration space.[80] Many learning algorithms use search algorithms based on optimization.

Simple exhaustive searches[125] are rarely sufficient for most real world problems: the search space (the number of places to search) quickly grows to astronomical numbers. The result is a search that is too slow or never completes. The solution, for many problems, is to use "heuristics" or "rules of thumb" that eliminate choices that are unlikely to lead to the goal (called "pruning the search tree"). Heuristics supply the program with a "best guess" for the path on which the solution lies.[126] Heuristics thereby restrict the search for solutions to a smaller set of candidates.[81]
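
As a rough illustration of heuristic search, the following sketch uses A*, one well-known heuristic search algorithm (the text above does not name a specific one). The grid world, the `#` wall marker and the Manhattan-distance heuristic are assumptions made for this example. The heuristic acts as the "best guess" that steers the search toward the goal.

```python
import heapq
from typing import Dict, List, Optional, Tuple

Cell = Tuple[int, int]


def manhattan(a: Cell, b: Cell) -> int:
    """Heuristic: grid distance ignoring walls, so it never overestimates."""
    return abs(a[0] - b[0]) + abs(a[1] - b[1])


def a_star(grid: List[str], start: Cell, goal: Cell) -> Optional[List[Cell]]:
    """Return a shortest path of cells from start to goal, or None if blocked."""
    rows, cols = len(grid), len(grid[0])
    # Frontier entries are (estimated total cost, cost so far, cell).
    frontier = [(manhattan(start, goal), 0, start)]
    came_from: Dict[Cell, Optional[Cell]] = {start: None}
    cost: Dict[Cell, int] = {start: 0}
    while frontier:
        _, g, current = heapq.heappop(frontier)
        if current == goal:
            path = []
            node = current
            while node is not None:  # walk back to the start
                path.append(node)
                node = came_from[node]
            return path[::-1]
        if g > cost[current]:
            continue  # stale entry: a cheaper route to this cell was found
        r, c = current
        for nr, nc in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            if not (0 <= nr < rows and 0 <= nc < cols) or grid[nr][nc] == "#":
                continue  # outside the grid or a wall
            new_cost = g + 1
            if new_cost < cost.get((nr, nc), float("inf")):
                cost[(nr, nc)] = new_cost
                came_from[(nr, nc)] = current
                estimate = new_cost + manhattan((nr, nc), goal)
                heapq.heappush(frontier, (estimate, new_cost, (nr, nc)))
    return None


GRID = [
    "....",
    ".##.",
    "....",
]
path = a_star(GRID, (0, 0), (2, 3))
print(path)
```

Setting the heuristic to zero everywhere would turn this into uniform-cost search, which in general expands more cells before reaching the goal.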

A very different kind of search came to prominence in the 1990s, based on the mathematical theory of optimization. For many problems, it is possible to begin the search with some form of a guess and then refine the guess incrementally until no more refinements can be made. These algorithms can be visualized as blind hill climbing: we begin the search at a random point on the landscape, and then, by jumps or steps, we keep moving our guess uphill, until we reach the top. Other optimization algorithms are simulated annealing, beam search and random optimization.[127]
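
The hill-climbing idea can be sketched in a few lines. The one-dimensional landscape, the fixed step size and the function name `hill_climb` are assumptions made for this illustration; practical optimizers are considerably more elaborate.

```python
from typing import Callable


def hill_climb(f: Callable[[float], float], x: float,
               step: float = 0.5, max_iters: int = 1000) -> float:
    """Move to the higher neighbour until neither neighbour improves f."""
    for _ in range(max_iters):
        best = max((x - step, x + step), key=f)
        if f(best) <= f(x):
            return x  # no uphill move is left: a (local) maximum
        x = best
    return x


def landscape(x: float) -> float:
    """A single smooth hill whose peak is at x = 3."""
    return -(x - 3.0) ** 2


peak = hill_climb(landscape, x=0.0)
print(peak)
```

On a landscape with several hills, the same procedure stops at whichever peak is nearest to the starting point. Methods such as simulated annealing and random restarts are intended to reduce that problem.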

Evolutionary computation uses a form of optimization search. For example, evolutionary algorithms may begin with a population of organisms (the guesses) and then allow them to mutate and recombine, selecting only the fittest to survive each generation (refining the guesses). Forms of evolutionary computation include swarm intelligence algorithms (such as ant colony or particle swarm optimization)[128] and evolutionary algorithms (such as genetic algorithms, gene expression programming, and genetic programming).[129]
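
The mutate, recombine and select cycle can be sketched as a small genetic algorithm. The "count the ones" fitness function, the population size, the mutation rate and all names below are hypothetical choices made for illustration only.

```python
import random
from typing import List, Tuple

Genome = List[int]


def fitness(genome: Genome) -> int:
    """Toy objective: the number of 1 bits in the genome."""
    return sum(genome)


def evolve(pop_size: int = 20, length: int = 16, generations: int = 40,
           mutation_rate: float = 0.05, seed: int = 0) -> Tuple[Genome, List[int]]:
    """Return the best genome found and the best fitness of each generation."""
    rng = random.Random(seed)
    population = [[rng.randint(0, 1) for _ in range(length)]
                  for _ in range(pop_size)]
    history = [max(map(fitness, population))]
    for _ in range(generations):
        # Selection: the fitter half survives unchanged.
        population.sort(key=fitness, reverse=True)
        survivors = population[: pop_size // 2]
        children = []
        while len(survivors) + len(children) < pop_size:
            parent_a, parent_b = rng.sample(survivors, 2)
            cut = rng.randrange(1, length)
            child = parent_a[:cut] + parent_b[cut:]  # recombination
            child = [1 - bit if rng.random() < mutation_rate else bit
                     for bit in child]  # mutation
            children.append(child)
        population = survivors + children
        history.append(max(map(fitness, population)))
    return max(population, key=fitness), history


best, history = evolve()
print(fitness(best), history[0], history[-1])
```

Because the survivors are carried over unchanged, the best fitness in the population never decreases from one generation to the next.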

Logic

Logic[130] is used for knowledge representation and problem solving, but it can be applied to other problems as well. For example, the satplan algorithm uses logic for planning[131] and inductive logic programming is a method for learning.[132]

Several different forms of logic are used in AI research. Propositional or sentential logic[133] is the logic of statements which can be true or false. First-order logic[134] also allows the use of quantifiers and predicates, and can express facts about objects, their properties, and their relations with each other. Fuzzy logic[135] is a version of first-order logic which allows the truth of a statement to be represented as a value between 0 and 1, rather than simply True (1) or False (0). Fuzzy systems can be used for uncertain reasoning and have been widely used in modern industrial and consumer product control systems. Subjective logic[136] models uncertainty in a different and more explicit manner than fuzzy logic: a given binomial opinion satisfies belief + disbelief + uncertainty = 1 within a Beta distribution. By this method, ignorance can be distinguished from probabilistic statements that an agent makes with high confidence.
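
Truth values between 0 and 1 can be illustrated with a minimal sketch. It assumes the commonly used minimum, maximum and complement operators for fuzzy AND, OR and NOT. The `warm` membership function and its 15 to 25 degree ramp are hypothetical.

```python
def fuzzy_and(a: float, b: float) -> float:
    """Conjunction as the minimum of the two truth values."""
    return min(a, b)


def fuzzy_or(a: float, b: float) -> float:
    """Disjunction as the maximum of the two truth values."""
    return max(a, b)


def fuzzy_not(a: float) -> float:
    """Negation as the complement of the truth value."""
    return 1.0 - a


def warm(temp_c: float) -> float:
    """Degree to which a temperature counts as warm: 0 at 15 C, 1 at 25 C."""
    return min(1.0, max(0.0, (temp_c - 15.0) / 10.0))


humid = 0.8  # assumed degree of truth for "the air is humid"
muggy = fuzzy_and(warm(20.0), humid)
print(warm(20.0), muggy)
```

When every input is exactly 0 or 1, these operators reduce to the ordinary Boolean connectives.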

Default logics, non-monotonic logics and circumscription[53] are forms of logic designed to help with default reasoning and the qualification problem. Several extensions of logic have been designed to handle specific domains of knowledge, such as: description logics;[47] situation calculus, event calculus and fluent calculus (for representing events and time);[48] causal calculus;[49] belief calculus;[137] and modal logics.[50]

Probabilistic methods for uncertain reasoning

Many problems in AI (in reasoning, planning, learning, perception and robotics) require the agent to operate with incomplete or uncertain information. AI researchers have devised a number of powerful tools to solve these problems using methods from probability theory and economics.[138]

Bayesian networks[139] are a very general tool that can be used for a large number of problems: reasoning (using the Bayesian inference algorithm),[140] learning (using the expectation-maximization algorithm),[141] planning (using decision networks)[142] and perception (using dynamic Bayesian networks).[143] Probabilistic algorithms can also be used for filtering, prediction, smoothing and finding explanations for streams of data, helping perception systems to analyze processes that occur over time (e.g., hidden Markov models or Kalman filters).[143]
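
Reasoning in a Bayesian network can be sketched with a tiny three-node example in which rain and a sprinkler both influence whether the grass is wet. The network structure and every probability below are invented for illustration. Inference is done by brute-force enumeration, which is practical only for very small networks.

```python
from itertools import product

# Hypothetical network: Rain -> WetGrass <- Sprinkler.
P_RAIN = 0.2
P_SPRINKLER = 0.1
# P(WetGrass = true | Rain, Sprinkler)
P_WET = {
    (True, True): 0.99,
    (True, False): 0.9,
    (False, True): 0.8,
    (False, False): 0.0,
}


def joint(rain: bool, sprinkler: bool, wet: bool) -> float:
    """Joint probability, factored along the edges of the network."""
    p = P_RAIN if rain else 1 - P_RAIN
    p *= P_SPRINKLER if sprinkler else 1 - P_SPRINKLER
    p_wet = P_WET[(rain, sprinkler)]
    return p * (p_wet if wet else 1 - p_wet)


def posterior_rain_given_wet() -> float:
    """P(Rain = true | WetGrass = true), summing out the sprinkler."""
    numerator = sum(joint(True, s, True) for s in (True, False))
    evidence = sum(joint(r, s, True)
                   for r, s in product((True, False), repeat=2))
    return numerator / evidence


print(round(posterior_rain_given_wet(), 3))
```

Observing wet grass raises the probability of rain above its prior of 0.2, because rain is the more likely of the two possible causes in this made-up model.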

A key concept from the science of economics is "utility": a measure of how valuable something is to an intelligent agent. Precise mathematical tools have been developed that analyze how an agent can make choices and plan, using decision theory, decision analysis,[144] and information value theory.[59] These tools include models such as Markov decision processes,[145] dynamic decision networks,[143] game theory and mechanism design.[146]
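
The role of utility in decision-making can be sketched as choosing the action with the highest expected utility. The actions, outcome probabilities and utility values below are hypothetical and serve only to show the arithmetic.

```python
from typing import Dict, Tuple


def expected_utility(action: str, outcome_probs: Dict[str, float],
                     utility: Dict[Tuple[str, str], float]) -> float:
    """Probability-weighted average utility of taking an action."""
    return sum(p * utility[(action, outcome)]
               for outcome, p in outcome_probs.items())


# Assumed beliefs about the weather and preferences over outcomes.
outcome_probs = {"rain": 0.3, "dry": 0.7}
utility = {
    ("umbrella", "rain"): 70.0,
    ("umbrella", "dry"): 80.0,
    ("no umbrella", "rain"): 0.0,
    ("no umbrella", "dry"): 100.0,
}

actions = ["umbrella", "no umbrella"]
best_action = max(
    actions, key=lambda a: expected_utility(a, outcome_probs, utility))
print(best_action)
```

Here carrying the umbrella has expected utility 0.3 × 70 + 0.7 × 80 = 77, against 0.3 × 0 + 0.7 × 100 = 70 for leaving it behind, so the agent takes it.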

Classifiers and statistical learning methods

The simplest AI applications can be divided into two types: classifiers ("if shiny then diamond") and controllers ("if shiny then pick up"). Controllers do, however, also classify conditions before inferring actions, and therefore classification forms a central part of many AI systems. Classifiers are functions that use pattern matching to determine a closest match. They can be tuned according to examples, making them very attractive for use in AI. These examples are known as observations or patterns. In supervised learning, each pattern belongs to a certain predefined class. A class can be seen as a decision that has to be made. All the observations combined with their class labels are known as a data set. When a new observation is received, that observation is classified based on previous experience.[147]

A classifier can be trained in various ways; there are many statistical and machine learning approaches. The most widely used classifiers are the neural network,[148] kernel methods such as the support vector machine,[149] k-nearest neighbor algorithm,[150] Gaussian mixture model,[151] naive Bayes classifier,[152] and decision tree.[153] The performance of these classifiers has been compared over a wide range of tasks. Classifier performance depends greatly on the characteristics of the data to be classified. There is no single classifier that works best on all given problems; this is also referred to as the "no free lunch" theorem. Determining a suitable classifier for a given problem is still more an art than science.[154]
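
Classification from labelled observations can be sketched with the k-nearest neighbor algorithm, one of the classifiers listed above. The two features (shininess and hardness), the data set and the class names are invented for illustration.

```python
import math
from collections import Counter
from typing import List, Sequence, Tuple

Observation = Tuple[Sequence[float], str]  # (feature vector, class label)


def knn_classify(data: List[Observation], query: Sequence[float],
                 k: int = 3) -> str:
    """Label the query by majority vote among its k closest observations."""
    nearest = sorted(data, key=lambda item: math.dist(item[0], query))[:k]
    votes = Counter(label for _, label in nearest)
    return votes.most_common(1)[0][0]


# Hypothetical data set: (shininess, hardness) -> class.
DATA = [
    ((0.90, 0.80), "diamond"),
    ((0.80, 0.90), "diamond"),
    ((0.95, 0.70), "diamond"),
    ((0.20, 0.10), "pebble"),
    ((0.10, 0.30), "pebble"),
    ((0.30, 0.20), "pebble"),
]

print(knn_classify(DATA, (0.85, 0.75)))
print(knn_classify(DATA, (0.15, 0.20)))
```

No explicit training step is needed: the data set itself serves as the model, and each new observation is classified by comparison with previous experience.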

Neural networks

The study of non-learning artificial neural networks[148] began in the decade before the field of AI research was founded, in the work of Walter Pitts and Warren McCulloch. Frank Rosenblatt invented the perceptron, a learning network with a single layer, similar to the old concept of linear regression. Early pioneers also include Alexey Grigorevich Ivakhnenko, Teuvo Kohonen, Stephen Grossberg, Kunihiko Fukushima, Christoph von der Malsburg, David Willshaw, Shun-Ichi Amari, Bernard Widrow, John Hopfield, Eduardo R. Caianiello, and others.

The main categories of networks are acyclic or feedforward neural networks (where the signal passes in only one direction) and recurrent neural networks (which allow feedback and short-term memories of previous input events). Among the most popular feedforward networks are perceptrons, multi-layer perceptrons and radial basis networks.[155] Neural networks can be applied to the problem of intelligent control (for robotics) or learning, using such techniques as Hebbian learning, GMDH or competitive learning.[156]
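
A single-layer perceptron, the simplest of the feedforward networks mentioned above, can be sketched as follows. The choice of the logical AND function as training data, the zero initial weights and the learning rate are assumptions made for this example.

```python
from typing import List, Sequence, Tuple

Sample = Tuple[Sequence[int], int]  # (inputs, target output)


def predict(weights: Sequence[float], bias: float, x: Sequence[int]) -> int:
    """Fire (output 1) when the weighted sum of the inputs exceeds zero."""
    activation = sum(w * xi for w, xi in zip(weights, x)) + bias
    return 1 if activation > 0 else 0


def train_perceptron(samples: List[Sample], epochs: int = 20,
                     lr: float = 1) -> Tuple[List[float], float]:
    """Perceptron rule: nudge weights toward the target after each mistake."""
    weights: List[float] = [0] * len(samples[0][0])
    bias: float = 0
    for _ in range(epochs):
        for x, target in samples:
            error = target - predict(weights, bias, x)
            weights = [w + lr * error * xi for w, xi in zip(weights, x)]
            bias += lr * error
    return weights, bias


AND_SAMPLES: List[Sample] = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
weights, bias = train_perceptron(AND_SAMPLES)
print([predict(weights, bias, x) for x, _ in AND_SAMPLES])
```

The rule converges here because AND is linearly separable. A function such as XOR is not, and a single-layer perceptron cannot represent it; that limitation is one motivation for multi-layer networks.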

Today, neural networks are often trained by the backpropagation algorithm, which had been around since 1970 as the reverse mode of automatic differentiation published by Seppo Linnainmaa,[157][158] and was introduced to neural networks by Paul Werbos.[159][160][161]
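
The chain-rule computation at the heart of backpropagation can be sketched on a toy network with one hidden unit and a squared-error loss. The network shape, the `tanh` activation and all names are assumptions made for illustration; real networks apply the same idea to vectors and matrices.

```python
import math
from typing import Tuple


def forward(w1: float, w2: float, x: float) -> Tuple[float, float]:
    """Hidden activation h = tanh(w1 * x) and output y = w2 * h."""
    h = math.tanh(w1 * x)
    return h, w2 * h


def loss(w1: float, w2: float, x: float, t: float) -> float:
    """Squared error between the network output and the target t."""
    _, y = forward(w1, w2, x)
    return (y - t) ** 2


def backward(w1: float, w2: float, x: float, t: float) -> Tuple[float, float]:
    """Gradients of the loss, propagated from the output back to each weight."""
    h, y = forward(w1, w2, x)
    dloss_dy = 2 * (y - t)
    dloss_dw2 = dloss_dy * h              # y = w2 * h
    dloss_dh = dloss_dy * w2
    dloss_dw1 = dloss_dh * (1 - h ** 2) * x  # derivative of tanh is 1 - tanh^2
    return dloss_dw1, dloss_dw2


def gradient_step(w1: float, w2: float, x: float, t: float,
                  lr: float = 0.01) -> Tuple[float, float]:
    """One gradient-descent update using the backpropagated gradients."""
    g1, g2 = backward(w1, w2, x, t)
    return w1 - lr * g1, w2 - lr * g2


w1, w2 = gradient_step(0.5, -1.5, x=2.0, t=1.0)
print(loss(0.5, -1.5, 2.0, 1.0), loss(w1, w2, 2.0, 1.0))
```

Each intermediate derivative is computed once and reused for the layer before it, which is what makes the reverse mode efficient for networks with many weights.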

Hierarchical temporal memory is an approach that models some of the structural and algorithmic properties of the neocortex.[162]

Deep feedforward neural networks

Deep learning in artificial neural networks with many layers has transformed many important subfields of artificial intelligence, including computer vision, speech recognition, natural language processing and others.[163][164][165]

According to a survey,[166] the expression "Deep Learning" was introduced to the Machine Learning community by Rina Dechter in 1986[167] and gained traction after Igor Aizenberg and colleagues introduced it to Artificial Neural Networks in 2000.[168] The first functional Deep Learning networks were published by Alexey Grigorevich Ivakhnenko and V. G. Lapa in 1965.[169] These networks are trained one layer at a time. Ivakhnenko's 1971 paper[170] describes the learning of a deep feedforward multilayer perceptron with eight layers, already much deeper than many later networks. In 2006, a publication by Geoffrey Hinton and Ruslan Salakhutdinov introduced another way of pre-training many-layered feedforward neural networks (FNNs) one layer at a time, treating each layer in turn as an unsupervised restricted Boltzmann machine, then using supervised backpropagation for fine-tuning.[171] Similar to shallow artificial neural networks, deep neural networks can model complex non-linear relationships. Over the last few years, advances in both machine learning algorithms and computer hardware have led to more efficient methods for training deep neural networks that contain many layers of non-linear hidden units and a very large output layer.[172]

Deep learning often uses convolutional neural networks (CNNs), whose origins can be traced back to the Neocognitron introduced by Kunihiko Fukushima in 1980.[173] In 1989, Yann LeCun and colleagues applied backpropagation to such an architecture. In the early 2000s, in an industrial application, CNNs already processed an estimated 10% to 20% of all the checks written in the US.[174] Since 2011, fast implementations of CNNs on GPUs have won many visual pattern recognition competitions.[165]

Deep feedforward neural networks were used in conjunction with reinforcement learning by AlphaGo, Google DeepMind's program that was the first to beat a professional human Go player.[175]

Deep recurrent neural networks

Early on, deep learning was also applied to sequence learning with recurrent neural networks (RNNs)[176] which are general computers and can run arbitrary programs to process arbitrary sequences of inputs. The depth of an RNN is unlimited and depends on the length of its input sequence.[165] RNNs can be trained by gradient descent[177][178][179] but suffer from the vanishing gradient problem.[163][180] In 1992, it was shown that unsupervised pre-training of a stack of recurrent neural networks can speed up subsequent supervised learning of deep sequential problems.[181]

Numerous researchers now use variants of a deep learning recurrent NN called the long short-term memory (LSTM) network published by Hochreiter & Schmidhuber in 1997.[182] LSTM is often trained by Connectionist Temporal Classification (CTC).[183] At Google, Microsoft and Baidu this approach has revolutionised speech recognition.[184][185][186] For example, in 2015, Google's speech recognition experienced a dramatic performance jump of 49% through CTC-trained LSTM, which is now available through Google Voice to billions of smartphone users.[187] Google also used LSTM to improve machine translation,[188] Language Modeling[189] and Multilingual Language Processing.[190] LSTM combined with CNNs also improved automatic image captioning[191] and a plethora of other applications.

Control theory

Control theory, the grandchild of cybernetics, has many important applications, especially in robotics.[192]

Languages

AI researchers have developed several specialized languages for AI research, including Lisp[193] and Prolog.[194]

Evaluating progress

In 1950, Alan Turing proposed a general procedure to test the intelligence of an agent now known as the Turing test. This procedure allows almost all the major problems of artificial intelligence to be tested. However, it is a very difficult challenge and at present all agents fail.[195]

Artificial intelligence can also be evaluated on specific problems such as small problems in chemistry, hand-writing recognition and game-playing. Such tests have been termed subject matter expert Turing tests. Smaller problems provide more achievable goals and there are an ever-increasing number of positive results.[196]

One classification for outcomes of an AI test is:[197]

- Optimal: it is not possible to perform better.

- Strong super-human: performs better than all humans.

- Super-human: performs better than most humans.

- Sub-human: performs worse than most humans.

For example, performance at draughts (i.e. checkers) is optimal,[198] performance at chess is super-human and nearing strong super-human (see computer chess: computers versus human) and performance at many everyday tasks (such as recognizing a face or crossing a room without bumping into something) is sub-human.

A quite different approach measures machine intelligence through tests which are developed from mathematical definitions of intelligence. Examples of such tests date from the late 1990s, when intelligence tests were devised using notions from Kolmogorov complexity and data compression.[199] Two major advantages of mathematical definitions are their applicability to nonhuman intelligences and their absence of a requirement for human testers.

A derivative of the Turing test is the Completely Automated Public Turing test to tell Computers and Humans Apart (CAPTCHA). As the name implies, this helps to determine that a user is an actual person and not a computer posing as a human. In contrast to the standard Turing test, a CAPTCHA is administered by a machine and targeted to a human, as opposed to being administered by a human and targeted to a machine. A computer asks a user to complete a simple test and then generates a grade for that test. Computers are unable to solve the problem, so correct solutions are deemed to be the result of a person taking the test. A common type of CAPTCHA is the test that requires the typing of distorted letters, numbers or symbols that appear in an image undecipherable by a computer.[200]

Applications

AI is relevant to any intellectual task.[201] Modern artificial intelligence techniques are pervasive and are too numerous to list here. Frequently, when a technique reaches mainstream use, it is no longer considered artificial intelligence; this phenomenon is described as the AI effect.[202]

High-profile examples of AI include autonomous vehicles (such as drones and self-driving cars), medical diagnosis, creating art (such as poetry), proving mathematical theorems, playing games (such as Chess or Go), search engines (such as Google search), online assistants (such as Siri), image recognition in photographs, spam filtering, prediction of judicial decisions[203] and targeting online advertisements.[201][204][205]

With social media sites overtaking TV as a source for news for young people and news organisations increasingly reliant on social media platforms for generating distribution,[206] major publishers now use artificial intelligence (AI) technology to post stories more effectively and generate higher volumes of traffic.[207]

Competitions and prizes

There are a number of competitions and prizes to promote research in artificial intelligence. The main areas promoted are: general machine intelligence, conversational behavior, data-mining, robotic cars, robot soccer and games.

Shaping the healthcare industry

Artificial intelligence is breaking into the healthcare industry by assisting doctors. According to Bloomberg Technology, Microsoft has developed AI to help doctors find the right treatments for cancer.[208] A great deal of research and drug development relates to cancer: there are more than 800 medicines and vaccines to treat it. This abundance burdens doctors, because the number of options makes it more difficult to choose the right drugs for each patient. Microsoft is working on a project to develop a machine called "Hanover". Its goal is to memorize all the papers relevant to cancer and help predict which combinations of drugs will be most effective for each patient. One project currently under way targets myeloid leukemia, a fatal cancer for which treatment has not improved in decades. Another study was reported to have found that artificial intelligence was as good as trained doctors in identifying skin cancers.[209]

According to CNN, a recent study by surgeons at the Children's National Medical Center in Washington successfully demonstrated surgery performed by a robot rather than a human. The team supervised an autonomous robot performing soft-tissue surgery, stitching together a pig's bowel during open surgery, and doing so better than a human surgeon.[210]

Automotive industry

Advancements in AI have contributed to the growth of the automotive industry through the creation and evolution of self-driving vehicles. As of 2016, there are over 30 companies using AI in the creation of driverless cars. A few companies involved with AI include Tesla, Google, and Apple.[211]

Many components contribute to the functioning of self-driving cars. These vehicles incorporate systems such as braking, lane changing, collision prevention, navigation and mapping. Together, these systems, as well as high-performance computers, are integrated into one complex vehicle.[212]

One main factor that influences the ability of a driverless car to function is mapping. In general, the vehicle would be pre-programmed with a map of the area being driven. This map would include data on the approximate heights of street lights and curbs so that the vehicle is aware of its surroundings. However, Google has been working on an algorithm intended to eliminate the need for pre-programmed maps and instead create a device that can adjust to a variety of new surroundings.[213] Some self-driving cars are not equipped with steering wheels or brakes, so there has also been research focused on creating an algorithm that is capable of maintaining a safe environment for the passengers in the vehicle through awareness of speed and driving conditions.[214]

Platforms

A platform (or "computing platform") is defined as "some sort of hardware architecture or software framework (including application frameworks), that allows software to run". As Rodney Brooks pointed out many years ago,[215] it is not just the artificial intelligence software that defines the AI features of the platform, but rather the actual platform itself that affects the AI that results, i.e., there needs to be work in AI problems on real-world platforms rather than in isolation.

A wide variety of platforms has allowed different aspects of AI to develop, ranging from expert systems such as Cyc to deep-learning frameworks to robot platforms such as the Roomba with open interface.[216] Recent advances in deep artificial neural networks and distributed computing have led to a proliferation of software libraries, including Deeplearning4j, TensorFlow, Theano and Torch.

Partnership on AI

Amazon, Google, Facebook, IBM, and Microsoft have established a non-profit partnership to formulate best practices on artificial intelligence technologies, advance the public's understanding, and to serve as a platform about artificial intelligence.[217] They stated: "This partnership on AI will conduct research, organize discussions, provide thought leadership, consult with relevant third parties, respond to questions from the public and media, and create educational material that advance the understanding of AI technologies including machine perception, learning, and automated reasoning."[217] Apple joined other tech companies as a founding member of the Partnership on AI in January 2017. The corporate members will make financial and research contributions to the group, while engaging with the scientific community to bring academics onto the board.[218]

Philosophy and ethics

There are three philosophical questions related to AI:

- Is artificial general intelligence possible? Can a machine solve any problem that a human being can solve using intelligence? Or are there hard limits to what a machine can accomplish?

- Are intelligent machines dangerous? How can we ensure that machines behave ethically and that they are used ethically?

- Can a machine have a mind, consciousness and mental states in exactly the same sense that human beings do? Can a machine be sentient, and thus deserve certain rights? Can a machine intentionally cause harm?

The limits of artificial general intelligence

Can a machine be intelligent? Can it "think"?

- Turing's "polite convention"

- We need not decide if a machine can "think"; we need only decide if a machine can act as intelligently as a human being. This approach to the philosophical problems associated with artificial intelligence forms the basis of the Turing test.[195]

- The Dartmouth proposal

- "Every aspect of learning or any other feature of intelligence can be so precisely described that a machine can be made to simulate it." This conjecture was printed in the proposal for the Dartmouth Conference of 1956, and represents the position of most working AI researchers.[219]

- Newell and Simon's physical symbol system hypothesis

- "A physical symbol system has the necessary and sufficient means of general intelligent action." Newell and Simon argue that intelligence consists of formal operations on symbols.[220] Hubert Dreyfus argued that, on the contrary, human expertise depends on unconscious instinct rather than conscious symbol manipulation and on having a "feel" for the situation rather than explicit symbolic knowledge. (See Dreyfus' critique of AI.)[221][222]

- Gödelian arguments

- Gödel himself,[223] John Lucas (in 1961) and Roger Penrose (in a more detailed argument from 1989 onwards) made highly technical arguments that human mathematicians can consistently see the truth of their own "Gödel statements" and therefore have computational abilities beyond that of mechanical Turing machines.[224] However, the modern consensus in the scientific and mathematical community is that these "Gödelian arguments" fail.[225][226][227]

- The artificial brain argument

- The brain can be simulated by machines and because brains are intelligent, simulated brains must also be intelligent; thus machines can be intelligent. Hans Moravec, Ray Kurzweil and others have argued that it is technologically feasible to copy the brain directly into hardware and software, and that such a simulation will be essentially identical to the original.[93]

- The AI effect

- Machines are already intelligent, but observers have failed to recognize it. When Deep Blue beat Garry Kasparov in chess, the machine was acting intelligently. However, onlookers commonly discount the behavior of an artificial intelligence program by arguing that it is not "real" intelligence after all; thus "real" intelligence is whatever intelligent behavior people can do that machines still cannot. This is known as the AI Effect: "AI is whatever hasn't been done yet."

Potential risks and moral reasoning

Widespread use of artificial intelligence could have unintended consequences that are dangerous or undesirable. Scientists from the Future of Life Institute, among others, described several short-term research goals: understanding how AI influences the economy, studying the laws and ethics involved with AI, and minimizing AI security risks. In the long term, the scientists have proposed to continue optimizing function while minimizing possible security risks that come along with new technologies.[228]

Machines with intelligence have the potential to use their intelligence to make ethical decisions. Research in this area includes "machine ethics", "artificial moral agents", and the study of "malevolent vs. friendly AI".

Existential risk

"The development of full artificial intelligence could spell the end of the human race. Once humans develop artificial intelligence, it will take off on its own and redesign itself at an ever-increasing rate. Humans, who are limited by slow biological evolution, couldn't compete and would be superseded." (Stephen Hawking)

A common concern about the development of artificial intelligence is the potential threat it could pose to mankind. This concern has recently gained attention after mentions by celebrities including Stephen Hawking, Bill Gates,[230] and Elon Musk.[231] A group of prominent tech titans including Peter Thiel, Amazon Web Services and Musk have committed $1 billion to OpenAI, a nonprofit company aimed at championing responsible AI development.[232] The opinion of experts within the field of artificial intelligence is mixed, with sizable fractions both concerned and unconcerned by risk from eventual superhumanly-capable AI.[233]

In his book Superintelligence, Nick Bostrom provides an argument that artificial intelligence will pose a threat to mankind. He argues that sufficiently intelligent AI, if it chooses actions based on achieving some goal, will exhibit convergent behavior such as acquiring resources or protecting itself from being shut down. If this AI's goals do not reflect humanity's (one example is an AI told to compute as many digits of pi as possible), it might harm humanity in order to acquire more resources or prevent itself from being shut down, ultimately to better achieve its goal.

For this danger to be realized, the hypothetical AI would have to overpower or out-think all of humanity, which a minority of experts argue is a possibility far enough in the future to not be worth researching.[234][235] Other counterarguments revolve around humans being either intrinsically or convergently valuable from the perspective of an artificial intelligence.[236]

Concern over risk from artificial intelligence has led to some high-profile donations and investments. In January 2015, Elon Musk donated $10 million to the Future of Life Institute to fund research on understanding AI decision making. The goal of the institute is to "grow wisdom with which we manage" the growing power of technology. Musk also funds companies developing artificial intelligence, such as Google DeepMind and Vicarious, to "just keep an eye on what's going on with artificial intelligence",[237] adding, "I think there is potentially a dangerous outcome there."[238][239]

Development of militarized artificial intelligence is a related concern. Currently, more than 50 countries are researching battlefield robots, including the United States, China, Russia, and the United Kingdom. Many people concerned about risk from superintelligent AI also want to limit the use of artificial soldiers.[240]

Devaluation of humanity

Joseph Weizenbaum wrote that AI applications cannot, by definition, successfully simulate genuine human empathy and that the use of AI technology in fields such as customer service or psychotherapy[241] was deeply misguided. Weizenbaum was also bothered that AI researchers (and some philosophers) were willing to view the human mind as nothing more than a computer program (a position now known as computationalism). To Weizenbaum, these points suggest that AI research devalues human life.[242]

Decrease in demand for human labor

Martin Ford, author of The Lights in the Tunnel: Automation, Accelerating Technology and the Economy of the Future,[243] and others argue that specialized artificial intelligence applications, robotics and other forms of automation will ultimately result in significant unemployment as machines begin to match and exceed the capability of workers to perform most routine and repetitive jobs. Ford predicts that many knowledge-based occupations, and in particular entry-level jobs, will be increasingly susceptible to automation via expert systems, machine learning[244] and other AI-enhanced applications. AI-based applications may also be used to amplify the capabilities of low-wage offshore workers, making it more feasible to outsource knowledge work.[245][page needed]

Artificial moral agents

The prospect of machines making ethical decisions raises the issue of how ethically a machine should behave towards both humans and other AI agents. This issue was addressed by Wendell Wallach in his book Moral Machines, in which he introduced the concept of artificial moral agents (AMA).[246] For Wallach, AMAs have become a part of the research landscape of artificial intelligence as guided by its two central questions, which he identifies as "Does Humanity Want Computers Making Moral Decisions"[247] and "Can (Ro)bots Really Be Moral".[248] For Wallach, the question centers less on whether machines can demonstrate the equivalent of moral behavior than on the constraints which society may place on the development of AMAs.[249]

Machine ethics

The field of machine ethics is concerned with giving machines ethical principles, or a procedure for discovering a way to resolve the ethical dilemmas they might encounter, enabling them to function in an ethically responsible manner through their own ethical decision making.[250] The field was delineated in the AAAI Fall 2005 Symposium on Machine Ethics: "Past research concerning the relationship between technology and ethics has largely focused on responsible and irresponsible use of technology by human beings, with a few people being interested in how human beings ought to treat machines. In all cases, only human beings have engaged in ethical reasoning. The time has come for adding an ethical dimension to at least some machines. Recognition of the ethical ramifications of behavior involving machines, as well as recent and potential developments in machine autonomy, necessitate this. In contrast to computer hacking, software property issues, privacy issues and other topics normally ascribed to computer ethics, machine ethics is concerned with the behavior of machines towards human users and other machines. Research in machine ethics is key to alleviating concerns with autonomous systems – it could be argued that the notion of autonomous machines without such a dimension is at the root of all fear concerning machine intelligence. Further, investigation of machine ethics could enable the discovery of problems with current ethical theories, advancing our thinking about Ethics."[251] Machine ethics is sometimes referred to as machine morality, computational ethics or computational morality. A variety of perspectives on this nascent field can be found in the collected edition "Machine Ethics",[250] which stems from the same symposium.[251]

Malevolent and friendly AI

Political scientist Charles T. Rubin believes that AI can be neither designed nor guaranteed to be benevolent.[252] He argues that "any sufficiently advanced benevolence may be indistinguishable from malevolence." Humans should not assume machines or robots would treat us favorably, because there is no a priori reason to believe that they would be sympathetic to our system of morality, which has evolved along with our particular biology (which AIs would not share). Hyper-intelligent software may not necessarily decide to support the continued existence of mankind, and would be extremely difficult to stop. This topic has also recently begun to be discussed in academic publications as a real source of risks to civilization, humans, and planet Earth.

Physicist Stephen Hawking, Microsoft founder Bill Gates and SpaceX founder Elon Musk have expressed concerns about the possibility that AI could evolve to the point that humans could not control it, with Hawking theorizing that this could "spell the end of the human race".[253]

One proposal to deal with this is to ensure that the first generally intelligent AI is a "Friendly AI", which would then be able to control subsequently developed AIs. Some question whether this kind of check could really remain in place.

Leading AI researcher Rodney Brooks writes, "I think it is a mistake to be worrying about us developing malevolent AI anytime in the next few hundred years. I think the worry stems from a fundamental error in not distinguishing the difference between the very real recent advances in a particular aspect of AI, and the enormity and complexity of building sentient volitional intelligence."[254]

Machine consciousness, sentience and mind

If an AI system replicates all key aspects of human intelligence, will that system also be sentient – will it have a mind which has conscious experiences? This question is closely related to the philosophical problem as to the nature of human consciousness, generally referred to as the hard problem of consciousness.

Consciousness

Computationalism and functionalism

Computationalism is the position in the philosophy of mind that the human mind or the human brain (or both) is an information processing system and that thinking is a form of computing.[255] Computationalism argues that the relationship between mind and body is similar or identical to the relationship between software and hardware and thus may be a solution to the mind-body problem. This philosophical position was inspired by the work of AI researchers and cognitive scientists in the 1960s and was originally proposed by philosophers Jerry Fodor and Hilary Putnam.

Strong AI hypothesis

The philosophical position that John Searle has named "strong AI" states: "The appropriately programmed computer with the right inputs and outputs would thereby have a mind in exactly the same sense human beings have minds."[256] Searle counters this assertion with his Chinese room argument, which asks us to look inside the computer and try to find where the "mind" might be.[257]

Robot rights

Mary Shelley's Frankenstein considers a key issue in the ethics of artificial intelligence: if a machine can be created that has intelligence, could it also feel? If it can feel, does it have the same rights as a human? The idea also appears in modern science fiction, such as the film A.I.: Artificial Intelligence, in which humanoid machines have the ability to feel emotions. This issue, now known as "robot rights", is currently being considered by, for example, California's Institute for the Future, although many critics believe that the discussion is premature.[258] The subject is discussed in depth in the 2010 documentary film Plug & Pray.[259]

Superintelligence

Are there limits to how intelligent machines – or human-machine hybrids – can be? A superintelligence, hyperintelligence, or superhuman intelligence is a hypothetical agent that would possess intelligence far surpassing that of the brightest and most gifted human mind. "Superintelligence" may also refer to the form or degree of intelligence possessed by such an agent.

Technological singularity

If research into Strong AI produced sufficiently intelligent software, it might be able to reprogram and improve itself. The improved software would be even better at improving itself, leading to recursive self-improvement.[260] The new intelligence could thus increase exponentially and dramatically surpass humans. Science fiction writer Vernor Vinge named this scenario the "singularity".[261] The technological singularity is the hypothesized point at which accelerating progress in technologies causes a runaway effect wherein artificial intelligence exceeds human intellectual capacity and control, thus radically changing or even ending civilization. Because the capabilities of such an intelligence may be impossible to comprehend, the technological singularity is an occurrence beyond which events are unpredictable or even unfathomable.[261]

Ray Kurzweil has used Moore's law (which describes the relentless exponential improvement in digital technology) to calculate that desktop computers will have the same processing power as human brains by the year 2029, and predicts that the singularity will occur in 2045.[261]

Transhumanism

"You awake one morning to find your brain has another lobe functioning. Invisible, this auxiliary lobe answers your questions with information beyond the realm of your own memory, suggests plausible courses of action, and asks questions that help bring out relevant facts. You quickly come to rely on the new lobe so much that you stop wondering how it works. You just use it. This is the dream of artificial intelligence." (Byte, April 1985)

Robot designer Hans Moravec, cyberneticist Kevin Warwick and inventor Ray Kurzweil have predicted that humans and machines will merge in the future into cyborgs that are more capable and powerful than either.[263] This idea, called transhumanism, has roots in the work of Aldous Huxley and Robert Ettinger and has been illustrated in fiction as well, for example in the manga Ghost in the Shell and the science-fiction series Dune.

In the 1980s, artist Hajime Sorayama's Sexy Robots series was painted and published in Japan, depicting the organic human form with lifelike, muscular metallic skins. A later book, The Gynoids, followed and was used by or influenced movie makers including George Lucas and other creatives. Sorayama never considered these organic robots to be a real part of nature, but always an unnatural product of the human mind: a fantasy existing in the mind even when realized in actual form.

Edward Fredkin argues that "artificial intelligence is the next stage in evolution", an idea first proposed in Samuel Butler's "Darwin among the Machines" (1863) and expanded upon by George Dyson in his 1998 book of the same name.[264]

In fiction

Thought-capable artificial beings have appeared as storytelling devices since antiquity.[13]

The implications of a constructed machine exhibiting artificial intelligence have been a persistent theme in science fiction since the twentieth century. Early stories typically revolved around intelligent robots. The word "robot" itself was coined by Karel Čapek in his 1921 play R.U.R., the title standing for "Rossum's Universal Robots". Later, the science fiction writer Isaac Asimov developed the Three Laws of Robotics, which he subsequently explored in a long series of robot stories. Asimov's laws are often brought up in lay discussions of machine ethics;[265] while almost all artificial intelligence researchers are familiar with Asimov's laws through popular culture, they generally consider the laws useless for many reasons, one of which is their ambiguity.[266]

The novel Do Androids Dream of Electric Sheep?, by Philip K. Dick, tells a science fiction story about androids and humans clashing in a futuristic world. Elements of artificial intelligence include the empathy box, the mood organ, and the androids themselves. Throughout the novel, Dick portrays the idea that human subjectivity is altered by technology created with artificial intelligence.[267]

Nowadays AI is firmly rooted in popular culture; intelligent robots appear in innumerable works. HAL, the murderous computer in charge of the spaceship in 2001: A Space Odyssey (1968), is an example of the common "robotic rampage" archetype in science fiction movies. The Terminator (1984) and The Matrix (1999) provide additional widely familiar examples. In contrast, the rare loyal robots such as Gort from The Day the Earth Stood Still (1951) and Bishop from Aliens (1986) are less prominent in popular culture.[268]

See also

- Glossary of artificial intelligence

- Abductive reasoning

- Case-based reasoning

- Commonsense reasoning

- Emergent algorithm

- Soft computing

- Machine learning

- Evolutionary computing

Notes

- The intelligent agent paradigm:

- Russell & Norvig 2003, pp. 27, 32–58, 968–972

- Poole, Mackworth & Goebel 1998, pp. 7–21

- Luger & Stubblefield 2004, pp. 235–240

- Hutter 2005, pp. 125–126

- Russell & Norvig 2009, p. 2.

- Schank, Roger C. (1991). "Where's the AI". AI Magazine. Vol. 12, no. 4. p. 38.